How to Lead Self-Improving AI Systems: Leadership Strategy Guide

Most leaders are preparing for better tools. The real shift is toward supervising improving systems.

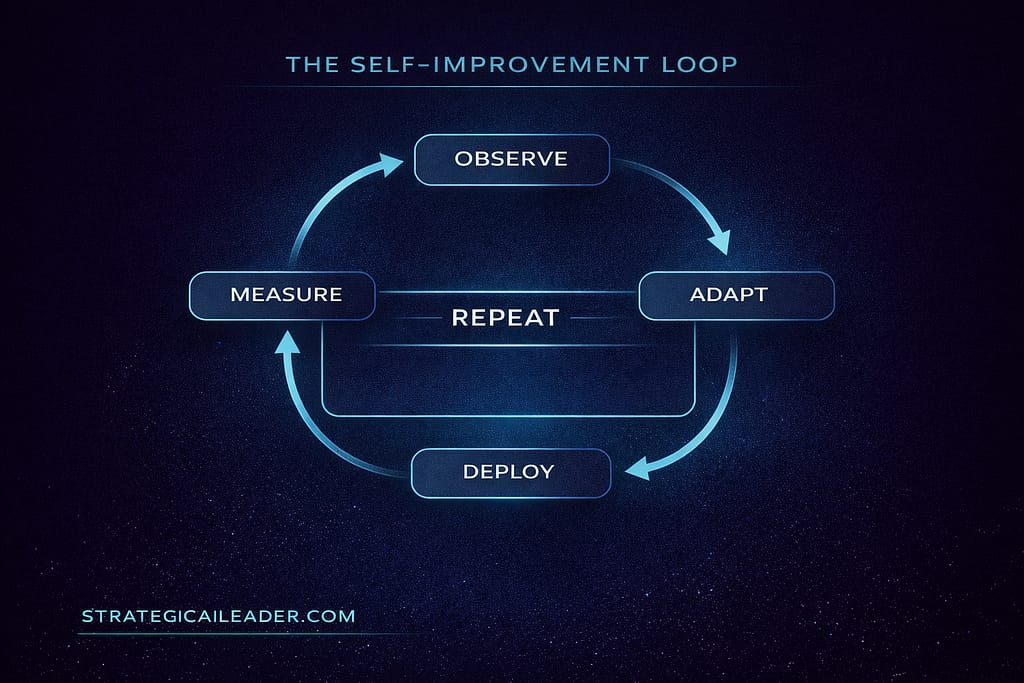

| What Are Self-Improving AI Systems? Self-improving AI systems are systems that modify their own outputs over time through evaluation loops, reinforcement pipelines, environment feedback, tool use, and code generation instead of one-time static training alone. |

Self-improving systems differ from periodically retrained models because improvement occurs inside the operating workflow, not only between training releases.

That is the definition. Keep it in scope. This article is not about AGI. It is not about machine consciousness. It is about production systems that already exist, already iterate, and already change their own behavior faster than most leadership teams track.

Most writing about self-improving AI focuses on the technical pipeline: how models are trained, evaluated, and updated. This article focuses on the leadership pipeline: what changes about how operators and executives manage systems that improve faster than teams adapt.

That gap is where most organizations are currently sitting.

The Problem Is Not Intelligence. It Is Architecture.

I expected the hardest part of deploying AI systems to be the capability gap. It wasn’t. The hardest part was realizing that our oversight layer had not moved at all while the systems underneath it kept changing.

Most leaders have deployed AI tools and are seeing real task-level productivity gains. People are writing faster, analyzing data faster, and handling more volume with the same headcount. That part works.

But self-improving AI systems create a different problem. The system you reviewed last quarter is not the same system running today. It has been updated, retrained, or replaced. The evaluation loops within it may have shifted outputs in ways your team has not yet noticed. And the feedback loop that would surface those shifts probably does not exist.

The primary risk of self-improving AI systems is not capability expansion. It is unmanaged improvement loops operating without supervision architecture.

– Richard Naimy

That is the structural gap. And it does not show up in any task-level productivity metric.

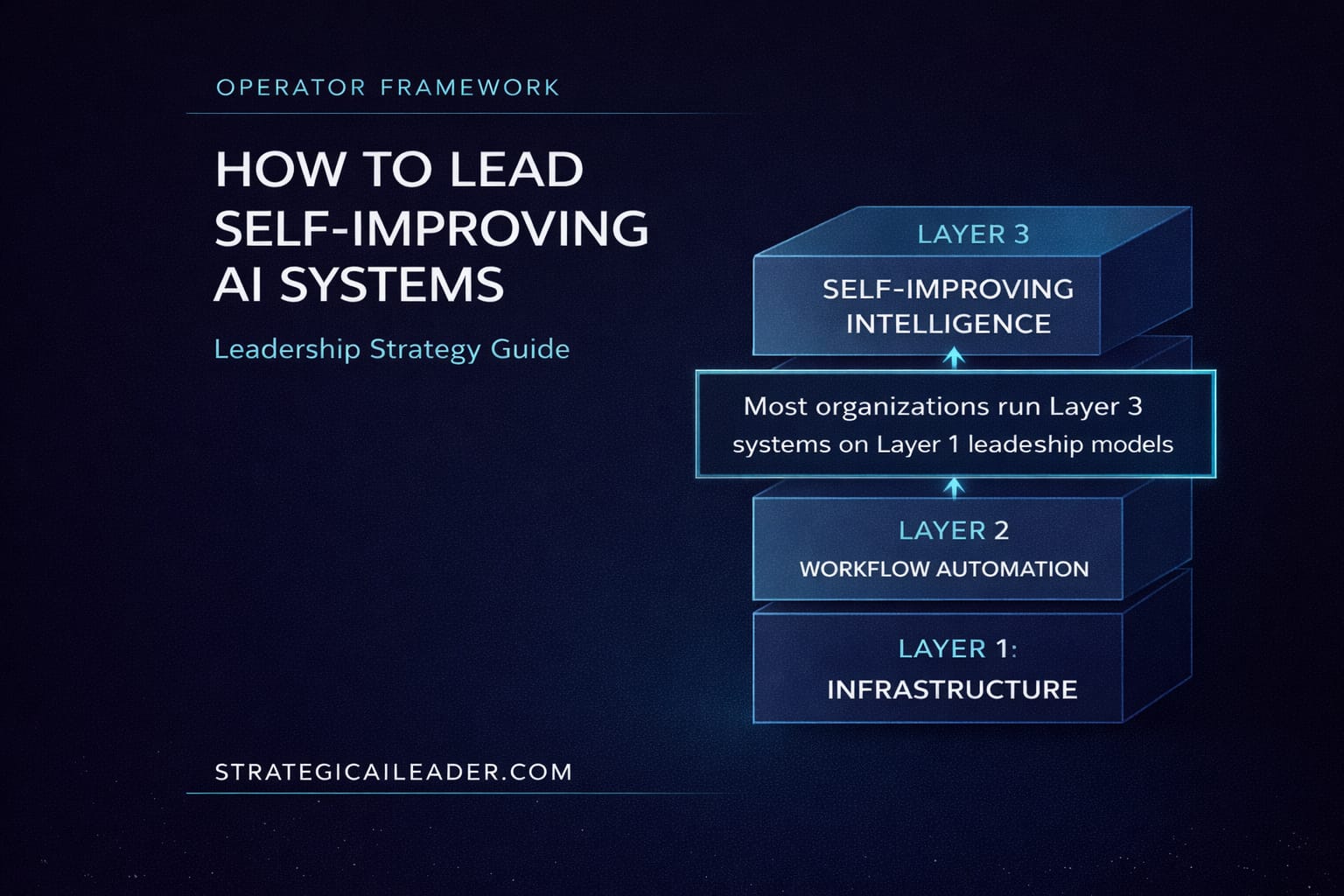

Most Organizations Are Managing Layer 3 Systems With Layer 1 Infrastructure.

What Leaders Get Wrong About This Transition

The most common assumption I encounter is that AI readiness means tool adoption. Get the team using the tools, train them on prompting, and measure time savings. That framing made sense in 2023 and 2024. It does not hold in 2026.

Three other misconceptions compound the problem.

First: capability improvements are treated as features to learn, not system changes to supervise. When a new model version drops, the instinct is to update the training and adjust the prompts. The instinct should be to ask what changed in the output distribution and whether the existing oversight layer still detects those shifts.

Second: governance and oversight are treated as the same function. They are not. Governance is policy. Oversight architecture is the structural layer that automatically enforces policy. Most organizations have governance documents. Almost none have an oversight architecture.

Third: the skills required to supervise improving systems are assumed to be the same skills required to use them. They are not. Using AI requires execution skill. Supervising AI systems requires system design skills. That transition is the one most teams are not making.

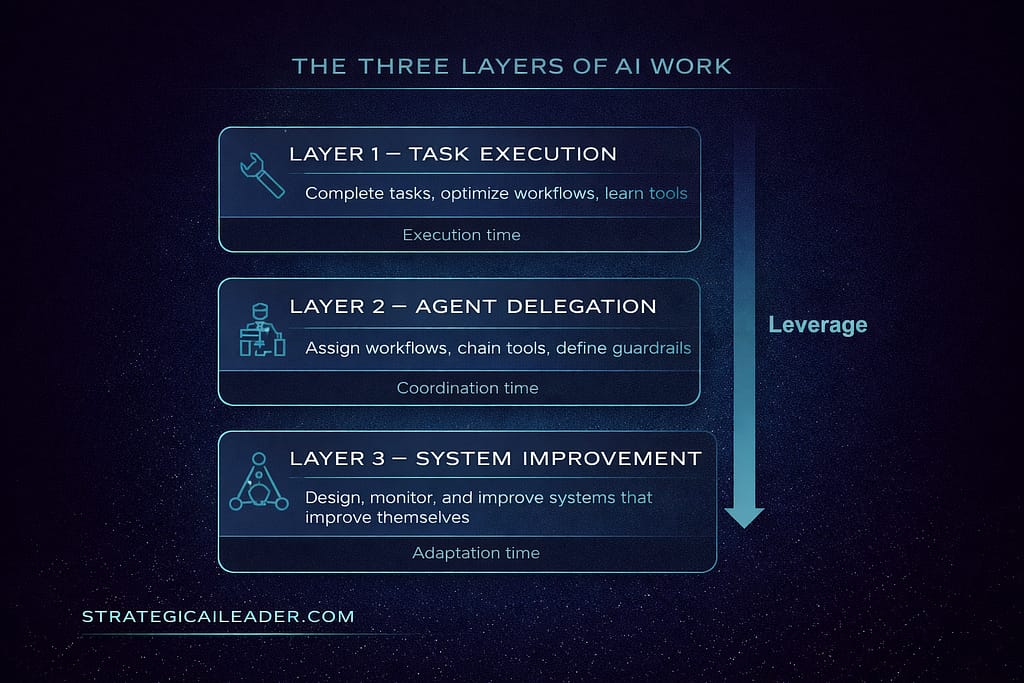

The Three Layers of AI Work

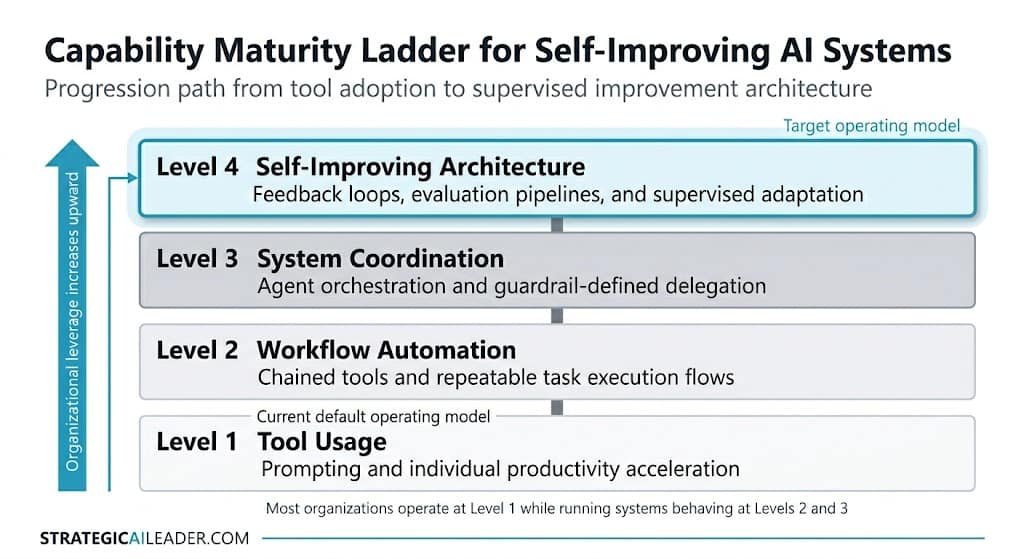

Most organizations are trying to manage Layer 3 systems using Layer 1 habits. The Three Layers of AI Work framework makes that mismatch visible.

The Three Layers of AI Work is a diagnostic framework for understanding operator leverage in AI-enabled environments. It separates three distinct competencies and leverage levels that look similar from the outside but require different skills and different infrastructure.

The Three Layers of AI Work Maturity Model

The leverage arrow runs from top to bottom. Each layer down compresses a different kind of time. Layer 1 compresses execution time. Layer 2 compresses coordination time. Layer 3 compresses adaptation time.

Adaptation time is the variable that self-improving AI systems put at risk. If you don’t have an oversight architecture, every model update forces manual re-adaptation. Your team absorbs the cost each time.

Adaptation time determines whether system improvements compound for your organization or against it.

Which Layer Are You Operating In Today?

This diagnostic is blunt. Read each description and identify the one that matches your current practice, not your ambition.

Layer 1 – Task Execution:

You use AI to complete tasks faster: write code, draft content, and analyze data. You invest in tool training and prompt quality. Speed is the primary metric you track.

Layer 2 – Agent Delegation:

You assign AI workflows across tools, chain agents together, and define guardrails for outputs. You think about delegation architecture. You measure coordination efficiency, not just individual task speed.

Layer 3 – System Improvement:

You design, monitor, and improve the systems that improve themselves. You have defined what gets measured, what thresholds trigger a review, and who owns the feedback loop when outputs shift.

Most operators reading this are at Layer 1. That is not a failure. Layer 1 creates real value. The problem is treating Layer 1 infrastructure as sufficient when the AI systems underneath it already exhibit Layer 2 and Layer 3 behavior.

What the Data Says About the Readiness Gap

The McKinsey State of AI 2025 report found that CEO-level oversight of AI governance correlates with higher Earnings Before Interest and Taxes (EBIT) impact from generative AI, with 28% of respondents reporting the CEO as responsible for AI governance. Organizations where oversight sits at the executive level generate measurably better returns. The constraint is not access to tools. It is the structural placement of the supervision function.

| EBIT (Earnings Before Interest and Taxes) measures how much operating profit a company generates from its core business before financing costs and taxes. In this context, a higher EBIT impact means organizations are not only saving time with AI, but they are also improving underlying operating performance. |

McKinsey’s MGI research on agents and automation found that job listings increasingly seek skills in process optimization, quality assurance, and teaching AI systems. Those are Layer 2 and Layer 3 skills. Listings for writing and research skills are declining. The labor market is already pricing in the transition.

The California Management Review’s AI Governance Maturity Matrix found that 45% of firms have not put AI on the board’s agenda at all, and only 14% of boards regularly discuss AI. Six of the top 50 US companies by market cap have directors with AI backgrounds. Governance awareness exists. Supervision architecture usually does not.

These are not fringe signals. They are converging data points that point to the same gap: organizations have adopted AI tools at Layer 1, while the systems they run have moved to Layer 2 and Layer 3 behavior.

The operational gap compounds quietly. Major model providers now release updates on cycles measured in weeks rather than quarters. Evaluation loop latency, the time between an output change and an operator detecting it, typically runs from days to weeks in organizations without oversight architecture in place. By the time drift is visible in outcomes, it has usually been running for weeks inside the workflow.
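Evaluation loop latency, as defined above, can be measured directly once changes and detections are logged. The following is a minimal sketch, assuming a hand-kept incident log; the dates and the `detection_latency` helper are hypothetical illustrations, not data from any real deployment.

```python
from datetime import datetime, timedelta
from statistics import median


def detection_latency(changed_at: datetime, detected_at: datetime) -> timedelta:
    """Time between an output change and an operator detecting it."""
    if detected_at < changed_at:
        raise ValueError("detection cannot precede the change")
    return detected_at - changed_at


# Hypothetical incident log: (when the output changed, when someone noticed).
incidents = [
    (datetime(2026, 1, 5), datetime(2026, 1, 19)),
    (datetime(2026, 2, 2), datetime(2026, 2, 5)),
    (datetime(2026, 3, 10), datetime(2026, 3, 31)),
]

latencies = [detection_latency(changed, detected) for changed, detected in incidents]
median_days = median(latency.days for latency in latencies)
print(f"Median detection latency: {median_days} days")
```

Tracking the median rather than a single incident shows whether the oversight layer is actually shortening the loop over time.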

Two Operators. Same AI System. Different Architecture.

Consider two operators inside the same company, both using the same AI-assisted workflow tool.

Operator A built their work around Layer 1. They became the most capable person on the team at using the tools. When the underlying model updated, they adapted, retested prompts, corrected drift, and updated their process notes. They were good at it. Fast, even. But the adaptation cost never went away. Every update cycle pulled them back to the same work: finding what changed, testing what broke, rebuilding confidence in the output. They were managing the system by living inside it.

Operator B started by trying to do the same thing. Their first attempt at defining quality criteria was too vague: “accurate and professional” is not a threshold, it is a preference. The first feedback loop they built flagged too much: every minor variation in tone triggered a review, and the team started ignoring the alerts within two weeks. They rebuilt it twice before it worked. The version that worked tracked three specific output dimensions with numerical bounds, checked against a sample set they had validated before deployment.

When the model updated three months in, the feedback loop caught a shift in citation formatting that would have taken Operator A several days of manual spot-checking to find. Operator B’s team fixed it in an afternoon. Not because they were smarter or faster. Because they had built the layer that absorbed the update instead of passing the cost to the operator.
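A check like Operator B's can be very small. The sketch below assumes each output in the validated sample set has already been scored on three dimensions; the dimension names, bounds, and scores are hypothetical, since the story only tells us that citation formatting was one of the things that shifted.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Bound:
    dimension: str
    low: float
    high: float


# Hypothetical dimensions and numerical bounds, agreed before deployment.
BOUNDS = [
    Bound("citation_format_valid", 0.95, 1.00),
    Bound("factual_match", 0.90, 1.00),
    Bound("length_ratio", 0.80, 1.20),
]


def check_outputs(scores: list[dict[str, float]], bounds: list[Bound]) -> list[str]:
    """Return the dimensions whose mean score falls outside its bounds."""
    breaches = []
    for bound in bounds:
        average = mean(sample[bound.dimension] for sample in scores)
        if not (bound.low <= average <= bound.high):
            breaches.append(bound.dimension)
    return breaches


# Scores for the same validated sample set, before and after a model update.
before_update = [
    {"citation_format_valid": 1.0, "factual_match": 0.95, "length_ratio": 1.0},
    {"citation_format_valid": 1.0, "factual_match": 0.92, "length_ratio": 1.1},
]
after_update = [
    {"citation_format_valid": 0.0, "factual_match": 0.95, "length_ratio": 1.0},
    {"citation_format_valid": 1.0, "factual_match": 0.93, "length_ratio": 1.05},
]

print(check_outputs(before_update, BOUNDS))
print(check_outputs(after_update, BOUNDS))
```

Averaging over the sample set, rather than alerting on every individual output, is one way to avoid the alert noise that sank the first version.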

My own first try looked like Operator B’s first feedback loop, the one that flagged too much. The alerts were noise. The team routed around them. I spent more time debugging the monitoring layer than I had spent building the workflow it was supposed to cover. The version that worked required stripping it back to two quality dimensions and one threshold each. Simpler than I expected. More specific than I wanted to bother with. Both were necessary.

What Is Oversight Architecture?

Oversight architecture is the structural system design layer that defines what gets measured, what thresholds trigger a correction, and how improvement loops inside AI systems are governed. It is distinct from governance policy. Policy says what should happen. Oversight architecture enforces it automatically. If you have deployed decision infrastructure to make AI execution reliable, oversight architecture is the monitoring and correction layer that sits above it.

System supervision is the operator practice that runs inside that architecture. It is what you actually do: monitoring, reviewing, correcting, and improving systems that iterate on their own outputs. Oversight architecture is the structure. System supervision is the behavior.

Most AI governance frameworks address oversight architecture as a compliance function. That framing underestimates it. Oversight architecture is a performance variable. The McKinsey data supports this: organizations with executive-level AI oversight report better outcomes. The likely reason is not that compliance is valuable, but that supervision architecture compresses the feedback loop between system behavior and corrective action.

The Knowledge Identity Shift

There is a quieter disruption inside this transition. For most of the last decade, expertise meant knowing more. The expert in the room was the person with the most accumulated answers. Knowledge was the asset.

Self-improving systems compress that advantage faster than most experts expect. When the system surfaces relevant knowledge in seconds, the value of knowing shifts toward the value of designing. Old model: execution creates status. New model: system judgment does.

This is the Knowledge Identity Shift. Operators who adapt stop curating answers and start designing the systems that generate them. That transition is harder than it sounds. It took me longer than I expected to make it.

What Layer 3 Leadership Actually Requires

The Fortune Supervisor Class piece from March 2026 documented how Salesforce support agents now handle 96% of cases autonomously, saving over 50,000 seller hours. The article framed it as a developer skill transition. That framing is half right. The deeper point is architectural: Salesforce did not get there by having employees use AI faster. They got there by building leadership decision loops that define the delegation architecture. That is the move from Layer 1 to Layer 2. The move from Layer 2 to Layer 3 is the same structural step, one level up. (Source: Fortune, March 2026)

Layer 3 leadership starts when output quality becomes something you define before deployment instead of something you inspect after failure.

- Output quality definition before deployment. You cannot supervise a system without specifying what good looks like in advance.

- Feedback loop design. Automatic flagging when outputs drift past quality thresholds.

- Variance tracking across model updates. Knowing what changed between versions and whether existing guardrails still hold.

- Correction playbooks. Pre-defined responses to specific failure modes rather than ad hoc debugging.

- Ownership assignment. A named human who is accountable for system behavior, not just tool output.

None of these require engineering expertise. They require system design thinking applied to AI workflows. That is a learnable skill. Most leaders have not started building it because no one named the layer that requires it.

Now it has a name.
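The five requirements listed above fit in one small record per workflow. This is a sketch under stated assumptions, not a reference implementation: `WorkflowOversight`, `review_update`, the metric name, the tolerance, and the playbook text are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowOversight:
    name: str
    owner: str        # named human accountable for system behavior
    metric: str       # the output quality definition, reduced to one score
    max_shift: float  # allowed absolute change in that score between versions
    playbooks: dict[str, str] = field(default_factory=dict)  # metric -> response


def review_update(spec: WorkflowOversight, baseline: float, candidate: float) -> str:
    """Compare a metric across two model versions and pick the response."""
    shift = abs(candidate - baseline)
    if shift <= spec.max_shift:
        return f"{spec.name}: within tolerance, no action"
    action = spec.playbooks.get(spec.metric, "escalate for manual review")
    return f"{spec.name}: {spec.metric} shifted by {shift:.2f}; {spec.owner}: {action}"


spec = WorkflowOversight(
    name="research summaries",
    owner="J. Doe",
    metric="citation_format_valid",
    max_shift=0.05,
    playbooks={"citation_format_valid": "rerun sample set with pinned prompt"},
)

print(review_update(spec, baseline=0.97, candidate=0.96))
print(review_update(spec, baseline=0.97, candidate=0.81))
```

The point of the structure is that the owner, the threshold, and the response are decided before the update arrives, not during the incident.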


Operator Readiness Checklist: Where to Start

Most teams try to build oversight architecture after output drift appears. By then, the system already defines the workflow, rather than the operator defining it.

These are the first actions to close the Layer-1-to-Layer-2 and Layer-2-to-Layer-3 gaps. Each takes one to four hours for an operator already running AI workflows.

Layer 1 to Layer 2 Transition

- Write down what good output looks like for your three highest-volume AI-assisted workflows. Not “accurate” – specific, measurable criteria.

- Name the human owner of each AI-assisted workflow. Ownership of tool access and ownership of output quality are different. Both need a name.

- Identify which of your current AI workflows has no feedback loop. That workflow is running at Layer 1 even if everything around it is at Layer 2.

Layer 2 to Layer 3 Transition

- Define one threshold per workflow that would trigger a review, such as an output quality score, an error type count, or a volume deviation. The constraint here is specificity: “accurate and professional” is not a threshold. It is a preference. Generic criteria create alert noise that teams learn to ignore within weeks. Narrow the definition until it catches real problems and ignores minor variations.

- Check when the AI model or tool you use most frequently was last updated. If you do not know, that is the diagnostic. Most operators inherit tools they did not design. The feedback loop that would surface model changes often does not exist inside the default tooling. Building it requires either output log access or a secondary review layer. Neither is free, but both cost less than discovering drift after it has shaped your outputs for three weeks.

- Name the person who owns output quality for each AI-assisted workflow. Not tool access, output quality. This is the hardest action on the list. No one wants to own quality for a system they did not build and cannot fully control. That hesitation is predictable. It is also the constraint that guarantees the oversight layer never gets built. The name does not need to be permanent. It needs to exist.
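The checklist above can be run as a quick audit across your workflows. The sketch below is one possible shape, assuming you keep a simple inventory; the `Workflow` fields and example entries are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Workflow:
    name: str
    quality_owner: Optional[str] = None        # who owns output quality
    review_threshold: Optional[str] = None     # what triggers a review
    model_last_updated: Optional[date] = None  # None means "we do not know"


def readiness_gaps(workflow: Workflow) -> list[str]:
    """List the checklist items this workflow has not yet satisfied."""
    gaps = []
    if not workflow.quality_owner:
        gaps.append("no named owner of output quality")
    if not workflow.review_threshold:
        gaps.append("no review threshold")
    if workflow.model_last_updated is None:
        gaps.append("last model update unknown")
    return gaps


# Hypothetical inventory of AI-assisted workflows.
inventory = [
    Workflow("support replies", "A. Smith", "error count > 3 per 100", date(2026, 2, 1)),
    Workflow("contract summaries"),
]

for workflow in inventory:
    print(workflow.name, "->", readiness_gaps(workflow) or "ready")
```

A workflow with all three gaps is the one running at Layer 1 regardless of what surrounds it.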

Start with the workflow where output quality matters most and the oversight layer is weakest. That is your highest-leverage first action.

System supervision is not an engineering function. It is an operator capability that can be installed workflow by workflow. The next article shows how leaders move from Layer 1 execution to Layer 3 system supervision in five practical stages, including detecting output drift, defining thresholds, and building feedback loops that survive model updates.

The Constraint Is Not Intelligence. It Is Design.

Self-improving AI systems do not wait for organizational readiness. The evaluation loops run whether or not an oversight layer is in place to catch them. The model updates whether or not the team has defined what good output looks like before it changes.

Most organizations are running Layer 3 AI systems with Layer 1 management infrastructure. The gap compounds every time the system improves. Adaptation time increases. Output drift goes undetected longer. The team spends more time reacting and less time designing.

The Three Layers of AI Work framework gives you a language for the transition. The diagnostic provides your current position. The checklist gives you a first action.

The operators who close this gap first will not be the ones with the most AI tools. They will be the ones who built the oversight architecture before they needed it.

That layer is buildable right now. The constraint is design, not intelligence.

Subscribe to receive the Layer 3 implementation guide when it publishes, including guidance on designing oversight architecture for AI systems that improve themselves. StrategicAILeader.com.

Frequently Asked Questions About Self-Improving AI Systems and Leadership Readiness

What are self-improving AI systems?

Self-improving AI systems are systems that modify their own outputs through evaluation loops, reinforcement pipelines, tool use, and feedback from real environments. These systems adjust behavior after deployment rather than relying only on static training. Leaders manage a moving system, not a fixed tool.

Why are most organizations not ready for self-improving AI systems?

Most organizations still optimize task execution instead of system supervision. Teams adopt tools, improve prompts, and measure speed gains, yet they rarely define output thresholds, monitoring layers, or correction triggers. The readiness gap appears at the architecture level, not the capability level.

What is Oversight Architecture in AI leadership?

Oversight Architecture is the structural layer that defines what gets measured, which thresholds trigger intervention, and how improvement loops stay aligned with business goals. Governance sets policy. Oversight Architecture enforces behavior across changing systems.

What is System Supervision, and how is it different from AI governance?

System Supervision refers to the operator’s practice of monitoring output drift, reviewing model updates, and maintaining feedback loops across AI workflows. Governance defines rules. System Supervision maintains performance as systems evolve.

What are the Three Layers of AI Work?

The Three Layers of AI Work framework separates operator leverage into three levels:

- Layer 1 focuses on task execution

- Layer 2 focuses on agent delegation

- Layer 3 focuses on system improvement

Most teams operate at Layer 1. Long-term leverage shifts toward Layer 3 as systems improve continuously.

Which layer should leaders focus on first?

Leaders should begin by stabilizing Layer 2 workflows, then introduce Layer 3 supervision mechanisms such as output thresholds, variance tracking, and feedback ownership. A follow-on implementation guide will outline how to design a Layer 3 supervision structure inside existing AI workflows.


About the Author

I’m Richard Naimy, an operator and product leader with over 20 years of experience growing platforms like Realtor.com and MyEListing.com. I work with founders and operating teams to solve complex problems at the intersection of AI, systems, and scale. I write to share real-world lessons from inside fast-moving organizations, offering practical strategies that help ambitious leaders build smarter and lead with confidence.

