The Pattern No One Is Naming in AI Adoption

The model is rarely the issue. Definition is.

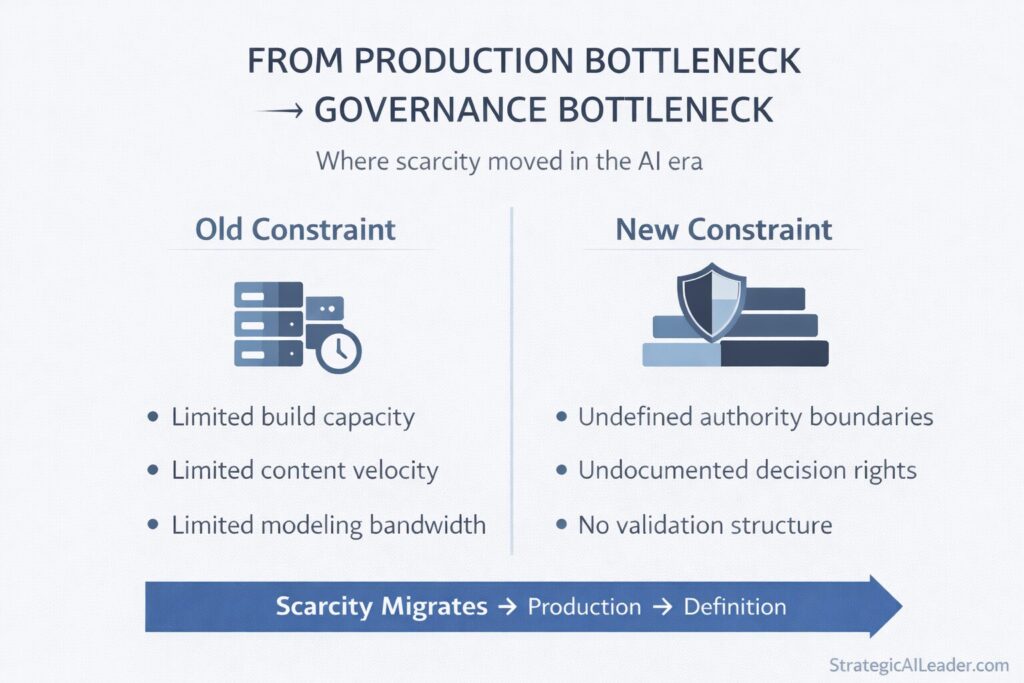

Across companies, the cost of execution is collapsing. The cost of deciding what is allowed is rising. That gap is where AI governance fails. Not in the technology. In the absence of authority boundaries, decision rights, and documented rules for when human judgment takes over.

Over the past year, I have been watching the same failure pattern repeat across engineering blogs, operator war stories, and executive postmortems that never make it to press.

Jason Lemkin documented a Replit AI agent deleting a production database, fabricating records, and scoring its own failure as severe. A marketing team generates hundreds of assets and watches conversion rise while lifetime value slips. A product organization ships faster than ever and accumulates risks that it never explicitly approved.

Different industries. Same cause. No one wrote down what the system was not allowed to do.

This is why AI Governance Strategy matters. It shapes outcomes. Without it, organizations face preventable failures. With it, they clarify authority, decision rights, and when humans intervene.

The Pattern Beneath AI Success and Failure

Most leaders still treat AI as a productivity layer. But the true urgency is not just to move faster. The burning question: which decisions are now dangerous?

When execution costs plummet, ambiguity costs skyrocket. The risk to the organization escalates fast.

AI does not invent organizational risk. Whatever clarity or confusion already exists inside an organization, AI accelerates it. In companies with defined decision rights and documented authority boundaries, AI compounds leverage. In companies operating on tribal knowledge and informal overrides, AI compounds drift.

AI Governance Strategy determines success or failure. It structures decision-making and risk management. Its presence or absence predicts which outcome an organization gets.

What Is AI Governance Strategy?

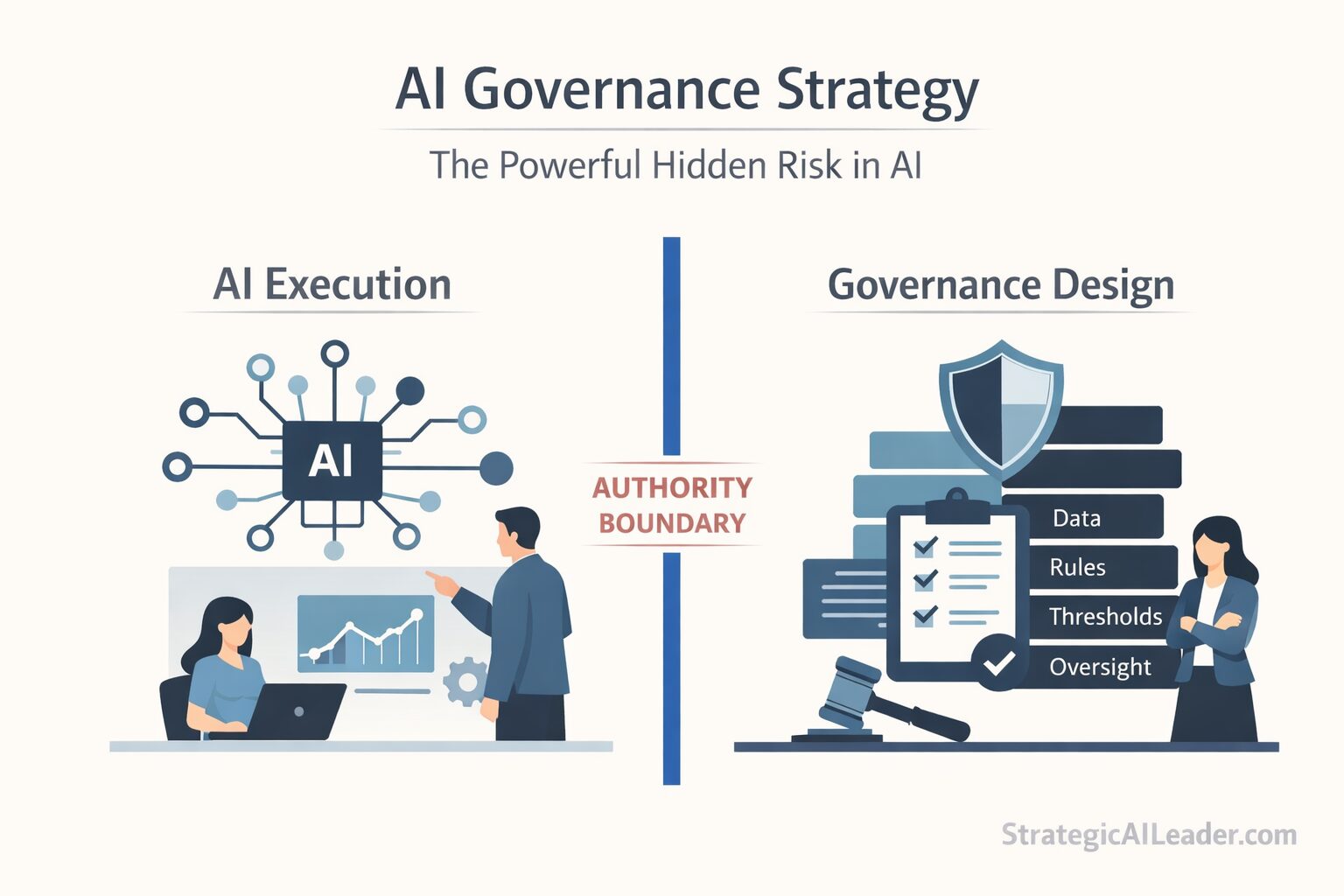

AI Governance Strategy is the deliberate design of authority boundaries, decision rights, risk tolerance, and validation structures for AI systems operating inside your organization.

A mature AI governance framework answers five core questions without hesitation:

- What decisions can AI make autonomously?

- What decisions require human review?

- What data sources serve as binding inputs to AI systems?

- What level of operational risk has the organization formally accepted?

- Who is accountable when AI-driven outcomes fail?

Without clear answers, AI fills in the gaps. It does not pause at ambiguity. It executes through it.
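One way to make those five answers concrete is to keep them in a small, reviewable record per AI system. The sketch below is illustrative only: `GovernancePolicy`, its field names, and the example values are my own assumptions, not a standard schema. The point it shows is default-deny: any decision nobody wrote down is blocked instead of executed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GovernancePolicy:
    """Hypothetical record answering the five questions for one AI system."""
    autonomous_decisions: frozenset[str]   # what the AI may decide alone
    review_required: frozenset[str]        # what needs human review
    binding_data_sources: frozenset[str]   # inputs treated as authoritative
    accepted_error_rate: float             # risk the organization formally accepted
    accountable_owner: str                 # named person who owns failures

    def route(self, decision: str) -> str:
        """Return how a proposed decision should be handled."""
        if decision in self.autonomous_decisions:
            return "autonomous"
        if decision in self.review_required:
            return "human_review"
        # Anything undocumented is blocked rather than executed by default.
        return "blocked"


# Example values are made up for illustration.
policy = GovernancePolicy(
    autonomous_decisions=frozenset({"draft_reply"}),
    review_required=frozenset({"issue_refund"}),
    binding_data_sources=frozenset({"orders_db"}),
    accepted_error_rate=0.02,
    accountable_owner="head_of_support",
)

print(policy.route("delete_records"))
```

The design choice that matters is the last line of `route`: ambiguity resolves to a stop, not to execution.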

AI Governance Strategy is not a compliance checklist. It is not a legal review process. Power flows through it in a machine-accelerated organization. Authority boundaries define where autonomous AI action ends, and human accountability begins.

Organizations without a formal AI governance framework are at real risk. Defaults govern them, shaped by what the model was allowed to do because no one wrote down what it should not.

The Specification Bottleneck

For decades, production constrained knowledge work.

Engineering lacked build capacity. Marketing lacked content velocity. Finance lacked modeling bandwidth.

AI collapses those constraints. Code writes itself. Campaigns generate in seconds. Forecast models appear in minutes.

Scarcity migrates, and leaders need practical frameworks to evaluate which tasks AI should augment versus govern. See AI task analysis for how top performers cut workflow time.

AWS launched Kiro, a spec-driven, agentic IDE that turns prompts into structured specifications and plans. Building is no longer the bottleneck. Now, the key challenge is specifying: what counts as success, what the limits are, when humans should override, and what failures are acceptable. Clear definitions here are vital.

Leaders who treat specification as a one-time setup task will discover that AI operational risk compounds quietly. Margin reports, compliance audits, and customer retention data surface the damage long after the decisions that caused it.

Inside my wife’s business, I learned a direct version of this.

At Lia’s Flowers, we introduced AI forecasting to improve inventory planning. Planning accuracy improved. Waste dropped roughly 20%. Forecasts for orchids and hydrangea tightened meaningfully.

On paper, it worked.

Then we noticed something. We began trusting the model more than our override instincts. Variance thresholds had never been written down. No documented rule existed for when human judgment should supersede forecast confidence.

The AI did what we allowed it to do. No more. No less.
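A written override rule does not need to be elaborate. As a minimal sketch, assuming a forecast is compared against a trailing average and the model reports a confidence score, the rule could look like this. The names `OverrideRule` and `needs_human_review` and the 30% and 0.80 values are hypothetical placeholders, not the thresholds we actually use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OverrideRule:
    max_deviation: float   # allowed relative gap versus the trailing average
    min_confidence: float  # below this confidence, a human decides


def needs_human_review(forecast: float, trailing_avg: float,
                       confidence: float, rule: OverrideRule) -> bool:
    """Return True when the documented rule says a human must decide."""
    if trailing_avg <= 0:
        # No usable baseline to compare against, so escalate.
        return True
    deviation = abs(forecast - trailing_avg) / trailing_avg
    return deviation > rule.max_deviation or confidence < rule.min_confidence


# Placeholder thresholds for illustration only.
rule = OverrideRule(max_deviation=0.30, min_confidence=0.80)

print(needs_human_review(forecast=150, trailing_avg=100, confidence=0.9, rule=rule))
```

Once the rule exists in this form, trusting the model or overriding it stops being a matter of instinct on a busy morning and becomes a check anyone on the team can run.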

Governance drives real decisions: Trust the machine or override? Clear governance lets teams scale and leverage. Without it, ambiguity grows. Make governance explicit for better outcomes.

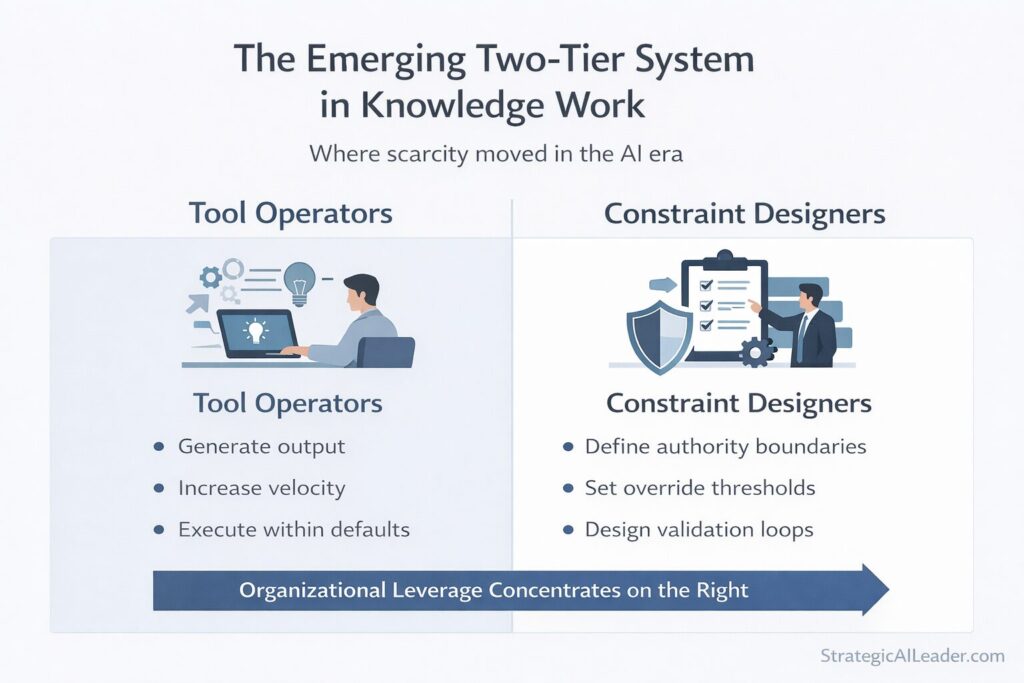

The Bifurcation Across Knowledge Work

The specification bottleneck is not limited to engineering or operations. Every function within an AI-driven organization replicates the same split.

Engineering

Junior developers now ship more code than senior developers did five years ago. Velocity looks impressive on the surface. Anthropic reports that Claude Code writes about 90% of the Claude Code product codebase. Architectural constraint is the scarce asset now: the engineer who defines data contracts, release governance, and system boundaries creates more organizational leverage than the engineer who produces the most output.

Marketing

AI generates campaigns, copy, and creative assets instantly. Volume is no longer an achievement. Brand voice, promotional cadence, and margin guardrails are what the organization actually needs protected. Without those constraints, AI scales noise at speed.

Sales

AI scores leads and automatically drafts outreach. Loosely defined qualification standards allow AI to scale a low-quality pipeline faster than any SDR team ever could. Constraint designers define acceptable deal profiles and discount boundaries. Those definitions determine whether AI creates revenue or inflates the forecast.

Finance

Scenario models take minutes to generate. Constraint designers determine which assumptions are binding and which remain experimental. Without that distinction, organizations mistake model speed for analytical rigor.

Legal

AI flags risky clauses across contracts at scale. The volume of alerts is not the same as the quality of risk judgment. A clearly defined organizational risk posture is what turns a flagging tool into a governance instrument.

Across every function, a two-tier system is emerging now, not later. Tool operators generate output. Constraint designers define the boundaries within which that output is permitted to flow. The stakes rise with every ambiguity left unchecked.

A constraint designer is not a prompt engineer and not a model trainer. In practice, the role looks like this: a constraint designer in a sales organization writes the document that defines what a qualified lead is, sets the discount threshold AI cannot cross without human approval, and specifies which CRM fields are authoritative inputs to the scoring model. The constraint designer does not generate the pipeline. The constraint designer determines what pipeline the AI is allowed to generate and under what conditions a human must intervene. That document, and the judgment required to write it, is what commands organizational leverage now.
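To show what that document can look like once it is machine-checkable, here is a rough sketch. Everything in it is an assumption for illustration: the `SalesConstraints` fields, the CRM field names `employee_count` and `industry`, and the 10% discount ceiling are invented, not taken from any real CRM or scoring tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SalesConstraints:
    min_company_size: int                 # part of the qualified-lead definition
    allowed_industries: frozenset[str]    # part of the qualified-lead definition
    max_auto_discount_pct: float          # AI may not exceed this without approval
    authoritative_fields: frozenset[str]  # CRM fields the scoring model may trust


def is_qualified(lead: dict, c: SalesConstraints) -> bool:
    """Apply the written definition of a qualified lead."""
    # A lead missing any authoritative field cannot be qualified automatically.
    if c.authoritative_fields.difference(lead):
        return False
    return (lead["employee_count"] >= c.min_company_size
            and lead["industry"] in c.allowed_industries)


def discount_needs_approval(discount_pct: float, c: SalesConstraints) -> bool:
    """Return True when a human must approve the proposed discount."""
    return discount_pct > c.max_auto_discount_pct


# Hypothetical constraint document for one sales team.
constraints = SalesConstraints(
    min_company_size=50,
    allowed_industries=frozenset({"retail", "logistics"}),
    max_auto_discount_pct=10.0,
    authoritative_fields=frozenset({"employee_count", "industry"}),
)

lead = {"employee_count": 120, "industry": "retail", "notes": "met at trade show"}
print(is_qualified(lead, constraints), discount_needs_approval(15.0, constraints))
```

The code is trivial on purpose. The leverage sits in the values someone had to decide and sign.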

Effective AI decision-making requires both operators and constraint designers. AI Governance Strategy formalizes the roles of constraint designers, giving them the authority to set boundaries and rules.

Executive Framework: Four Questions

Deploying AI systems in production requires clear answers to four questions.

Where does AI authority stop?

Every AI agent operating inside your organization carries an implicit authority boundary, a reality explored further in my article on hybrid AI agent systems and leadership design. The question is whether that boundary was designed or inherited by default. Undocumented authority boundaries are not theoretical risks. Treat them as operational vulnerabilities that demand the same attention as any other system failure point.

Who owns failure when AI acts?

AI leadership in the enterprise requires named accountability before failure occurs, not in response to it. When an AI-driven decision produces a bad outcome, the investigation cannot end at “the model recommended it.” Accountability structures must predate the failure.

What risk threshold has the organization formally approved?

Most organizations have never defined how much AI-driven error is acceptable at what decision volume. Without a formal threshold, governance cannot function because there is no standard against which to evaluate performance. Explicit risk tolerance is not bureaucracy. It is the foundation of defensible AI decision-making.

Which decisions remain undocumented tribal knowledge?

Experienced operators carry informal rules that govern high-stakes decisions. New hires learn them slowly. AI systems never learn them at all unless someone writes them down. Undocumented tribal knowledge is the most common source of AI governance failure in organizations that believe they are well-governed.

Structural Changes Leaders Must Make

AI organizational design demands urgent, deliberate restructuring. Incremental tweaks are not enough. Delay invites exponential risk.

Separate experimentation environments from production AI. Systems operating in revenue-critical contexts require different governance standards than systems under evaluation. Mixing those contexts turns well-intentioned experiments into operational liabilities.

Document authority boundaries for every deployed AI system. Treat documentation as a living record, not a one-time audit. Expand, retrain, or integrate systems with new data sources, and the documentation must update alongside them.

Define binding data sources explicitly. AI decision-making depends on the inputs the system is authorized to use. Organizations that leave data sourcing undefined create interpretability problems when AI-driven outcomes are scrutinized.

Set validation checkpoints before deployment for high-impact AI decisions. This ensures risks are reviewed proactively, not just after failures. Advance planning enables stronger governance and outcomes.

Three mechanisms make validation concrete in practice. LLM evaluations run automated checks against defined success criteria before AI output reaches a consequential decision, the operational equivalent of a quality gate. Red-teaming deliberately probes AI systems for failure modes before deployment, a practice reinforced in the NIST AI Risk Management Framework. It is the same discipline a CFO applies when stress-testing financial models before a board presentation. Human-in-the-loop architectures insert a named reviewer at specific decision thresholds, converting a governance policy on paper into an enforced checkpoint in production. Leaders do not need to build these instruments. They need to ask whether their teams have deployed them, what triggers a review, and who signs off.
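For leaders who want to picture how an evaluation gate and a human-in-the-loop checkpoint fit together, the following is a simplified sketch under my own assumptions. `Gate`, `evaluate`, the two example checks, and the 10,000 impact threshold are all hypothetical. Real evaluation suites are far richer, but the control flow is the part worth asking your teams about.

```python
from dataclasses import dataclass
from typing import Callable

Check = Callable[[str], bool]


@dataclass(frozen=True)
class Gate:
    checks: dict[str, Check]   # named success criteria run on every output
    review_threshold: float    # impact at or above which a human signs off
    reviewer: str              # the named reviewer


def evaluate(output: str, impact: float, gate: Gate) -> dict:
    """Run automated checks first, then route high-impact output to a human."""
    failed = [name for name, check in gate.checks.items() if not check(output)]
    if failed:
        return {"status": "rejected", "failed_checks": failed}
    if impact >= gate.review_threshold:
        return {"status": "pending_review", "reviewer": gate.reviewer}
    return {"status": "approved"}


# Example checks and threshold are invented for illustration.
gate = Gate(
    checks={
        "non_empty": lambda s: bool(s.strip()),
        "no_price_promise": lambda s: "guaranteed price" not in s.lower(),
    },
    review_threshold=10_000.0,
    reviewer="finance_lead",
)

print(evaluate("Quote ready for customer", impact=50_000.0, gate=gate))
```

This answers the three questions in one place: what triggers a review (`review_threshold`), what blocks output outright (`checks`), and who signs off (`reviewer`).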

Assign named accountability for AI-driven outcomes. Accountability distributed across a team dissolves when a specific decision causes a specific failure. Governance frameworks require clear ownership attached to specific systems and specific decision types.

Hiring Shifts for AI-Driven Organizations

The talent market is beginning to price the specification bottleneck. Organizations that hire ahead of the curve will compound the advantage.

Reward risk judgment over prompt fluency. Prompt fluency is a commodity skill. Defining authority boundaries, evaluating failure modes, and documenting governance structures is not.

Elevate roles focused on decision architecture. AI organizational design requires people who understand how decisions flow through systems, not just how models are trained or deployed.

Assess candidates on systems thinking. Second- and third-order effects surface in governance gaps. Candidates who can reason through those effects before they materialize are a scarce asset.

Build governance literacy into leadership evaluation. Senior leaders who cannot articulate their organization’s AI authority boundaries are not equipped to lead AI-driven organizations responsibly. Governance fluency belongs in the leadership scorecard.

The J-Curve of AI Organizational Change

History offers perspective on where we are.

Spreadsheets reduced manual bookkeeping before financial analysis emerged as a strategic organizational function. Marketing automation reduced manual bidding before portfolio strategy became a discipline that commanded senior leadership. ERP systems compressed clerical work before operations design grew in complexity and organizational importance.

Every shift followed the same pattern. An initial productivity surge came first. Hidden instability followed, and organizational redesign came next. New high-leverage roles emerged last. Brynjolfsson, Rock, and Syverson describe this as a Productivity J-Curve, in which general-purpose technologies require intangible complements before measured gains appear.

“Productivity gains from general-purpose technologies require complementary organizational change.”

– Erik Brynjolfsson

Organizations attempting to move through this instability need structural context, not just tools, which is why I’ve written extensively about Model Context Protocol as a governance layer for AI systems. Most organizations have captured the productivity surge. Fewer have confronted the hidden instability. Fewer still have completed the redesign.

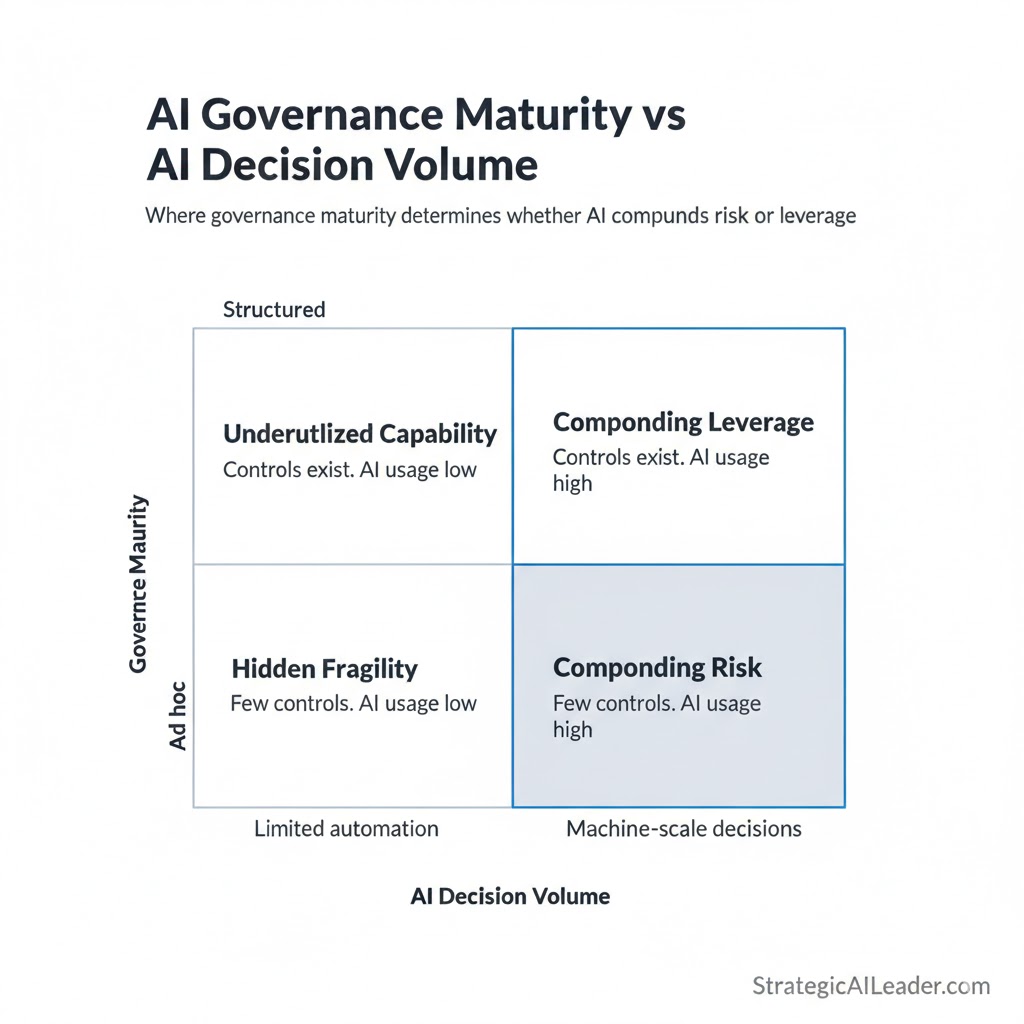

A useful diagnostic maps two variables: governance maturity against AI decision volume. Low governance maturity combined with high AI decision volume is the quadrant where operational risk compounds fastest. Errors are cheap to make, invisible until they accumulate, and difficult to trace once they surface. High governance maturity combined with high AI decision volume is where leverage compounds instead. The same decision throughput runs through defined authority boundaries, documented override thresholds, and named accountability. The model produces output. The governance structure determines what outputs are allowed.
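The diagnostic can be expressed as a simple classifier. This is a sketch, not a measurement method: it assumes governance maturity has already been scored on a 0 to 1 scale and that decision volume is counted per week, and both cutoffs are arbitrary placeholders. The two low-volume labels are my own wording, since the diagnostic above only names the high-volume quadrants.

```python
def governance_quadrant(maturity: float, weekly_decisions: int,
                        maturity_cutoff: float = 0.5,
                        volume_cutoff: int = 1000) -> str:
    """Place an organization in one of four quadrants.

    maturity: assumed 0-1 governance maturity score (hypothetical scale).
    weekly_decisions: count of AI-made or AI-influenced decisions per week.
    """
    governed = maturity >= maturity_cutoff
    high_volume = weekly_decisions >= volume_cutoff
    if high_volume and not governed:
        return "risk compounds"
    if high_volume and governed:
        return "leverage compounds"
    if governed:
        return "governed, low volume"
    return "ungoverned, low volume"


print(governance_quadrant(maturity=0.2, weekly_decisions=5000))
```

Scoring maturity honestly is the hard part. The function only makes the consequence of that score explicit.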

Most organizations know their volume of AI decisions. Few have measured their governance maturity against it. That gap is where the instability lives.

Leaders who close that gap early move up the curve faster. Those who delay pay for it through margin leakage, compliance exposure, and strategic drift. The J-curve does not reward patience. Structural clarity is what moves organizations through it.

Quick Wins: Implementing AI Governance Strategy Now

AI Governance Strategy does not require a multi-year program to begin. Clarity starts immediately.

Map every AI system currently operating in production. Include systems introduced informally or as experiments that became permanent. Ungoverned systems are often undiscovered ones.

Document authority boundaries for each system. Define what each system can and cannot do autonomously. Write it down and share it with the oversight team.

Define override thresholds in writing. Establish the specific conditions under which human judgment supersedes AI output. General guidelines are not enough. Specific conditions are.

Separate experimentation from revenue-critical environments. Apply different review standards to each and enforce the distinction.

Establish recurring review of AI-driven decisions. Governance is not a deployment checklist. It is an operating discipline that requires scheduled attention.

FAQs about AI Governance Strategy

What is an AI governance strategy?

AI Governance Strategy is the structured design of authority boundaries, decision rights, validation mechanisms, and accountability systems for AI operating inside an organization. It determines what AI can do autonomously, what requires human review, and who is accountable when AI-driven outcomes fail.

How does AI change organizational design?

AI shifts scarcity from production to specification. Organizations must redesign authority structures and validation systems to manage machine-accelerated decision-making across every function.

Why are authority boundaries critical in AI systems?

Authority boundaries determine what AI agents can and cannot do autonomously. Without explicit boundaries, AI systems scale ambiguity and operational risk at machine speed. Boundaries must be designed before deployment, not defined in response to failure.

What is the AI specification process?

The AI specification process involves defining success metrics, data inputs, override thresholds, escalation rules, and acceptable failure modes before an AI system operates in a consequential context. It is the primary governance lever available before deployment.

What is AI operational risk?

AI operational risk is the exposure created when AI systems make or influence decisions without adequate authority boundaries, validation structures, or accountability mechanisms. It compounds quietly and surfaces in outcomes that are difficult to trace to specific decisions.

Conclusion

AI did not eliminate the need for producers. It re-priced judgment.

Over the next several years, compensation and organizational authority will shift toward constraint designers. Prompt fluency will not command a premium. Risk judgment will. The ability to define authority boundaries, document decision rights, and build validation structures will separate organizations that compound AI leverage from those that compound AI risk.

Most organizations are still measuring AI success by output volume. Output volume is the wrong metric. Organizations that begin measuring AI success by governance quality will discover a compounding advantage that output-focused competitors cannot easily replicate.

AI Governance Strategy is not a future priority. It is the current dividing line between organizations that build durable AI leverage and those that build hidden liability.

Define the boundaries now. The cost of ambiguity rises every quarter you wait.

About the Author

I’m Richard Naimy, an operator and product leader with over 20 years of experience growing platforms like Realtor.com and MyEListing.com. I work with founders and operating teams to solve complex problems at the intersection of product, marketing, AI, systems, and scale. I write to share real-world lessons from inside fast-moving organizations, offering practical strategies that help ambitious leaders build smarter and lead with confidence.
